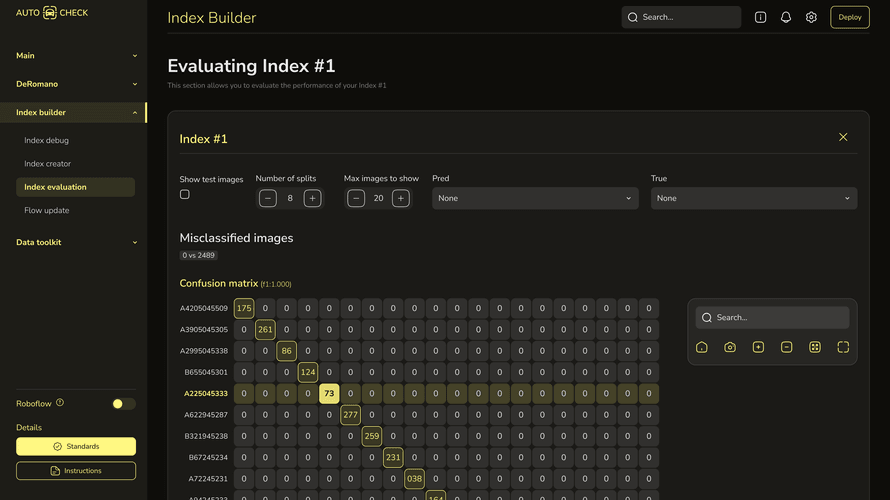

The decision depends on how much of the problem comes from the model itself versus the system around it.

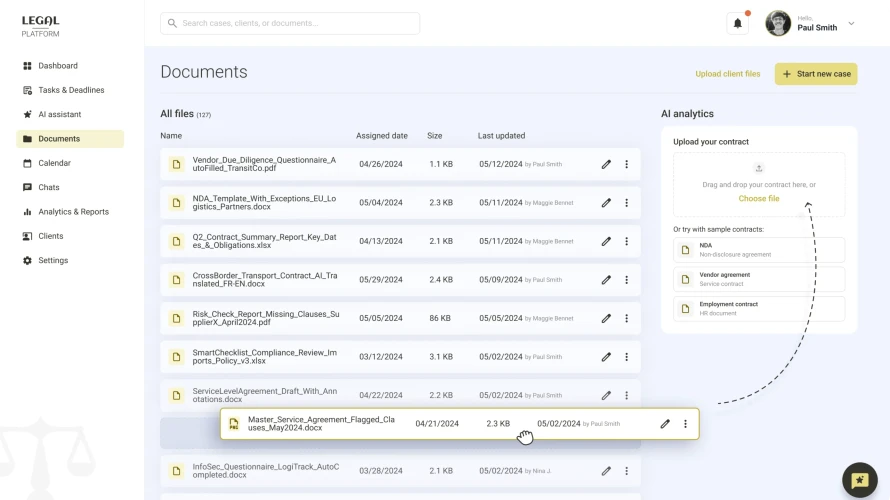

We start by evaluating model performance in context, not in isolation. This includes checking input quality, prompt or feature design, consistency of outputs, and how results are used in workflows. In many cases, issues attributed to the model are caused by missing context, poor data handling, or unclear evaluation criteria.
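One way to make "in context" concrete is to score the same model on the same cases twice. The first pass uses inputs as they arrive in production. The second uses cleaned inputs with the context they need. On top of that, you can measure how often repeated calls agree. The sketch below is illustrative only. It assumes a hypothetical `model` callable that maps a prompt string to an answer string, and a hypothetical `Case` record holding both a raw and a curated version of each input. Neither comes from a specific framework.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class Case:
    # Hypothetical evaluation record: the same request as it arrives in
    # production (raw) and with cleaned input plus needed context (curated).
    raw_input: str
    curated_input: str
    expected: str


def accuracy(model: Callable[[str], str], inputs: Sequence[str], expected: Sequence[str]) -> float:
    """Fraction of inputs for which the model's answer equals the expected answer."""
    hits = sum(model(x) == y for x, y in zip(inputs, expected))
    return hits / len(expected) if expected else 0.0


def consistency(model: Callable[[str], str], inputs: Sequence[str], runs: int = 5) -> float:
    """Average share of repeated runs that agree with the most common answer per input."""
    if not inputs:
        return 0.0
    scores = []
    for x in inputs:
        answers = Counter(model(x) for _ in range(runs))
        scores.append(answers.most_common(1)[0][1] / runs)
    return sum(scores) / len(scores)


def evaluate_in_context(model: Callable[[str], str], cases: Sequence[Case]) -> dict:
    """Compare raw vs. curated accuracy and measure output consistency."""
    expected = [c.expected for c in cases]
    return {
        "raw_accuracy": accuracy(model, [c.raw_input for c in cases], expected),
        "curated_accuracy": accuracy(model, [c.curated_input for c in cases], expected),
        "consistency": consistency(model, [c.curated_input for c in cases]),
    }
```

A large gap between `raw_accuracy` and `curated_accuracy` suggests that the problem lies in the system (data handling, missing context) rather than in the model.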

If the model performs adequately with proper inputs and constraints, we keep it and redesign the surrounding system. This may include adding retrieval mechanisms, restructuring prompts, or introducing validation layers.
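A validation layer can be as simple as checking each output against an explicit contract before anything downstream uses it. When the output fails the check, the layer re-asks the model. The sketch below assumes a hypothetical contract: a JSON object with a `label` from a fixed set and a numeric `confidence` between 0 and 1. The `model` callable, the label set, and the retry count are placeholders for illustration, not part of any particular system.

```python
import json
from typing import Callable, Optional

# Hypothetical output contract for this sketch: a JSON object whose "label"
# is one of ALLOWED_LABELS and whose "confidence" is a number in [0, 1].
ALLOWED_LABELS = {"positive", "negative", "neutral"}


def validate(raw: str) -> Optional[dict]:
    """Return the parsed output if it satisfies the contract, else None."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    label = data.get("label")
    confidence = data.get("confidence")
    if not isinstance(label, str) or label not in ALLOWED_LABELS:
        return None
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        return None
    if not 0.0 <= confidence <= 1.0:
        return None
    return data


def call_with_validation(model: Callable[[str], str], prompt: str, max_attempts: int = 3) -> dict:
    """Call the model, retrying on invalid output; raise rather than pass bad data downstream."""
    for _ in range(max_attempts):
        result = validate(model(prompt))
        if result is not None:
            return result
    raise ValueError(f"no valid output after {max_attempts} attempts")
```

Raising an error after the last attempt, rather than returning a best guess, keeps malformed output out of the workflow. A caller could instead catch the error and fall back to a default or route the request for human review.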

If the model cannot meet accuracy or latency requirements even under correct conditions, we replace it or combine it with alternatives, such as smaller specialized models, ensemble approaches, or routing strategies.
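Routing can take several forms. One simple variant is a confidence cascade: a smaller specialized model answers first, and any request it is unsure about goes to a larger model. This is offered as an illustration, not a prescribed design. The two-model setup, the `(answer, confidence)` return shape, and the 0.8 default threshold are all assumptions made for the sketch.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

# Hypothetical model interface for this sketch: each model maps a request
# to (answer, confidence), with confidence in [0, 1].
Model = Callable[[str], Tuple[str, float]]


@dataclass
class Router:
    small: Model            # e.g. a cheaper, specialized model
    large: Model            # e.g. a slower, more general model
    threshold: float = 0.8  # minimum confidence to accept the small model's answer

    def __call__(self, request: str) -> Tuple[str, str]:
        """Return (answer, name of the model that produced it)."""
        answer, confidence = self.small(request)
        if confidence >= self.threshold:
            return answer, "small"
        # Escalate low-confidence requests to the larger model.
        answer, _ = self.large(request)
        return answer, "large"
```

The threshold trades latency and cost against accuracy. Raising it sends more traffic to the larger model. Its value would typically be tuned on held-out evaluation data.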