Modern development teams rely on AI security tools to scan code, flag vulnerabilities, and suggest fixes during development. These tools promise faster detection and reduced manual effort. At the same time, security incidents continue to increase in frequency and sophistication.

Attackers no longer take hours to move through systems. CrowdStrike reports an average breakout time of 29 minutes, with some attacks unfolding in seconds, which compresses response time to near zero.

This creates a clear gap between tooling capability and real outcomes. If AI security tools are improving detection and automation, why does risk remain high?

The answer lies in how secure code is built today. Security is not defined by a single tool or scan. It depends on how teams manage noise, prioritize issues, and connect development with runtime environments – and that is exactly what we will be discussing in this article with Viktoryia Makarskaya, Data Science expert at Aristek.

What risks do unsecured AI systems create?

Before we look at how AI security tools help development teams, it is important to clarify one point. AI plays two roles in modern systems. It helps secure applications, but it also introduces new types of risk.

Traditional security focuses on vulnerabilities in code and infrastructure. AI systems add another layer. They rely on data, models, and external dependencies, which creates risks that do not exist in standard software.

This means teams are now dealing with two parallel challenges:

- securing applications with AI tools

- securing the AI systems themselves

Some AI systems introduce risk. Others help detect and reduce it. In practice, both exist in the same environment, and the risks they introduce behave differently from traditional software vulnerabilities.

These risks often develop gradually, remain hidden during testing, and surface only under real-world conditions. They affect not only system security, but also business decisions, compliance posture, and operational stability – and this is now a priority concern for organizations operating at scale.

Understanding these risks requires looking beyond definitions – so let’s review the most significant ones in more detail.

1. Data poisoning

Data poisoning affects the training phase of AI systems. Attackers or low-quality data sources introduce manipulated or biased data into training pipelines. Unlike traditional attacks, this does not immediately break the system. Instead, it shifts the model’s behavior over time.

For example, a fraud detection model trained on manipulated transaction data may start allowing suspicious activity. A recommendation system may begin favoring certain outputs due to biased inputs. These changes are often subtle and difficult to trace back to the source.

The key challenge is visibility. Once corrupted data enters the pipeline, it becomes part of the model’s logic. Detection often requires comparing outputs over time or auditing training datasets, which many teams do not do consistently.
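One lightweight way to audit training data is to compare the label mix of each new batch against a trusted baseline. The sketch below is a minimal illustration, not a complete poisoning defense: the function names, the sample labels, and the 0.1 threshold are all assumptions chosen for the example, and a check like this only catches shifts in label proportions, not carefully crafted individual records.

```python
from collections import Counter


def label_distribution(labels):
    """Return the share of each label in a non-empty list of labels."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    total = len(labels)
    return {label: count / total for label, count in counts.items()}


def total_variation_distance(baseline, candidate):
    """Half the sum of absolute differences between two label distributions."""
    p = label_distribution(baseline)
    q = label_distribution(candidate)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in set(p) | set(q))


def needs_audit(baseline, candidate, threshold=0.1):
    """Flag a new batch whose label mix drifts past the (assumed) threshold."""
    return total_variation_distance(baseline, candidate) > threshold


# Hypothetical data: a trusted baseline and a new batch with far more "fraud" labels.
baseline_labels = ["legit"] * 95 + ["fraud"] * 5
new_batch_labels = ["legit"] * 80 + ["fraud"] * 20

print(needs_audit(baseline_labels, new_batch_labels))
```

Running a check like this on every incoming batch gives teams a recurring signal to review, rather than relying on someone noticing changed model behavior months later.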

2. Model theft and IP leakage

AI models are valuable assets. They encapsulate proprietary data, training effort, and domain knowledge. When exposed through APIs or weak access controls, attackers can extract model behavior by repeatedly querying it. Over time, they can reconstruct a functionally similar model.

Model extraction attacks have been demonstrated across multiple domains, including natural language processing and computer vision systems. In practice, attackers do not need full access. They only need enough interaction to approximate outputs.

The impact is twofold. First, organizations lose intellectual property. Second, attackers gain insight into how the model behaves, which makes it easier to bypass or manipulate.
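Because extraction depends on a high volume of queries, a per-client query budget is a common first line of defense. The following is a minimal sketch of a sliding-window limit. The class name `QueryBudget` and the limits used are hypothetical, and a real deployment would usually enforce this at the API gateway and combine it with monitoring for unusual query patterns.

```python
import time
from collections import defaultdict, deque


class QueryBudget:
    """Sliding-window cap on prediction requests per client."""

    def __init__(self, max_queries, window_seconds):
        self.max_queries = max_queries
        self.window_seconds = window_seconds
        self._history = defaultdict(deque)  # client_id -> request timestamps

    def allow(self, client_id, now=None):
        """Return True and record the request if the client is under budget."""
        now = time.monotonic() if now is None else now
        history = self._history[client_id]
        # Drop timestamps that have fallen out of the window.
        while history and now - history[0] >= self.window_seconds:
            history.popleft()
        if len(history) >= self.max_queries:
            return False
        history.append(now)
        return True


# Hypothetical limit: at most 3 queries per client in any 60-second window.
budget = QueryBudget(max_queries=3, window_seconds=60)
decisions = [budget.allow("client-a", now=t) for t in (0, 1, 2, 3)]
print(decisions)
```

A budget like this does not make extraction impossible, but it raises the time and cost an attacker needs to approximate the model.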

3. Adversarial attacks

Adversarial attacks target how models interpret input data. Attackers craft inputs that appear normal but are designed to trigger incorrect outputs. These inputs exploit weaknesses in how models generalize patterns.

For example, a small change in input data can cause a classification model to mislabel an object or a security system to misidentify a user. In fraud systems, attackers can manipulate transaction features to avoid detection without raising obvious red flags.

These attacks are difficult to detect because they do not rely on system compromise. The system behaves as designed, but the input has been engineered to produce a specific outcome.

NIST identifies adversarial manipulation as a core risk in AI systems, emphasizing that robustness must be tested under adversarial conditions, not only standard inputs.
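To make the idea of testing under adversarial conditions concrete, here is a small brute-force perturbation check. It is a sketch under simplifying assumptions: `toy_fraud_model` is an invented stand-in for a real classifier, the features are assumed to be scaled to a similar range, and the check tries every combination of small shifts, which is only practical for a handful of features. Dedicated robustness tooling uses far more efficient search strategies.

```python
from itertools import product


def is_stable(predict, features, epsilon):
    """Return True if no +/- epsilon shift of the features flips the prediction."""
    original = predict(features)
    # Try every combination of -epsilon, 0, +epsilon per feature (3^n cases).
    for signs in product((-1, 0, 1), repeat=len(features)):
        perturbed = [x + s * epsilon for x, s in zip(features, signs)]
        if predict(perturbed) != original:
            return False
    return True


def toy_fraud_model(features):
    """Hypothetical linear scorer standing in for a trained model."""
    amount, velocity = features
    return "fraud" if 0.6 * amount + 0.4 * velocity > 0.5 else "legit"


# A transaction far from the decision boundary versus one sitting right on it.
print(is_stable(toy_fraud_model, [0.9, 0.9], epsilon=0.05))
print(is_stable(toy_fraud_model, [0.52, 0.5], epsilon=0.05))
```

Inputs that flip under tiny changes mark the regions where an attacker needs the least effort, so they are good candidates for extra review or additional training data.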

4. AI supply chain risks

AI systems depend heavily on external components. These include pre-trained models, datasets, open-source libraries, and third-party APIs. Each dependency introduces a potential entry point for vulnerabilities.

A model trained on unverified data may include hidden biases or malicious patterns. A third-party library may contain security flaws. Licensing issues can also create legal exposure if datasets are used improperly.

These risks are difficult to manage because they originate outside the organization. Teams often trust third-party components without full visibility into how they were built or validated.

5. Operational and availability risks

AI systems depend on high computational capacity and complex infrastructure. This makes them sensitive to traffic spikes, malformed inputs, and targeted abuse. Unlike traditional applications, performance degradation often affects output quality before a system fully fails. This creates a risk where systems continue operating but produce unreliable results.

For example, an AI service under heavy load may return delayed or inconsistent outputs. In systems that support decision-making, this can lead to incorrect recommendations or failed transactions. These issues are harder to detect because the system does not go offline. It continues operating with degraded performance.

Attackers increasingly exploit this behavior. Instead of breaching systems directly, they target resource consumption. By sending large volumes of requests or triggering compute-intensive operations, they can exhaust infrastructure capacity. This leads to slowdowns, increased costs, or service disruption.
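Since the target here is compute rather than request count, it helps to charge each request according to its estimated cost. Below is a minimal token bucket sketch that does this. The class name, the capacity, and the cost values are assumptions for illustration, and the caller passes the current time explicitly to keep the example deterministic (in a running service this would typically come from `time.monotonic()`).

```python
class TokenBucket:
    """Cost-weighted token bucket: expensive requests drain the budget faster."""

    def __init__(self, capacity, refill_per_second):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = float(capacity)
        self.last_refill = 0.0

    def try_consume(self, cost, now):
        """Refill based on elapsed time, then spend `cost` tokens if available."""
        elapsed = max(0.0, now - self.last_refill)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_refill = now
        if cost > self.tokens:
            return False
        self.tokens -= cost
        return True


# Hypothetical costs: a long-context inference call costs more than a short one.
bucket = TokenBucket(capacity=10, refill_per_second=1)
first = bucket.try_consume(cost=8, now=0)   # heavy request, bucket is full
second = bucket.try_consume(cost=5, now=1)  # only about 3 tokens available
third = bucket.try_consume(cost=5, now=3)   # bucket has refilled enough
print(first, second, third)
```

Weighting requests by cost means a client cannot exhaust capacity with a small number of deliberately expensive calls that a simple request counter would let through.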

Data from Cloudflare shows that automated traffic accounts for a significant share of internet activity, with bots responsible for a large portion of requests in many environments. A growing percentage of this traffic is malicious, including attempts to overload APIs and AI-driven services.

AI risks are interconnected. A compromised dataset can affect model behavior. A stolen model can be used to identify weaknesses. A degraded system can lead to incorrect outputs.

These issues do not exist in isolation. Reliability is no longer only about uptime. It also includes performance stability, resilience under adversarial input, and the ability to handle both malicious traffic and unexpected system behavior.

Why AI security is a business enabler

Who does not trust numbers when it comes to risk and impact?

AI security’s value shows up clearly in measurable outcomes. Organizations that invest in securing AI systems see lower financial exposure, stronger compliance readiness, and faster adoption of new technologies.

The difference between secure and unprepared organizations is already visible in real data.

- Reduced financial and legal risk

Security incidents remain expensive and long-lasting. The global average cost of a data breach is $4.44 million, and breaches take an average of 241 days to identify and contain. Organizations that use AI and automation in security reduce breach costs by up to $1.9 million per incident, which is a 34% cost reduction compared to those without it.

- Regulatory and compliance readiness

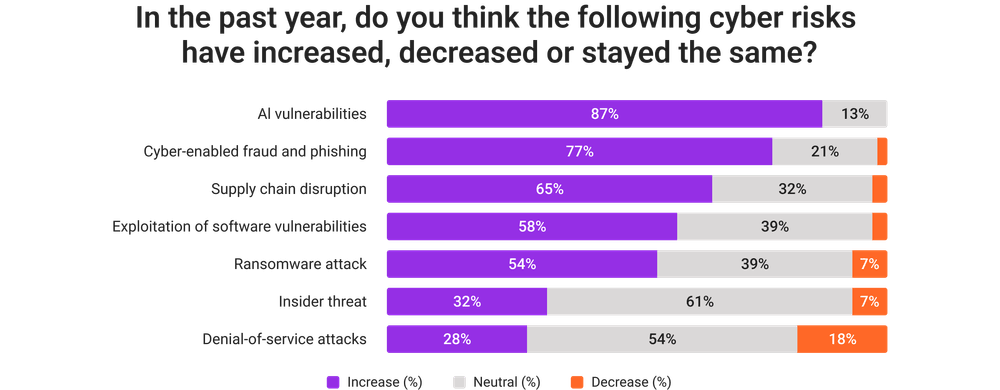

As the WEF’s Global Cybersecurity Outlook states, 87% of organizations identify AI-related vulnerabilities as the fastest-growing cyber risk.

At the same time, only 64% of organizations have processes to assess AI security before deployment, although this has improved from 37% the previous year. This gap indicates many companies are unprepared for regulatory scrutiny. Early governance implementation gives organizations a clear advantage during audits and compliance checks.

- Trust and reputation protection

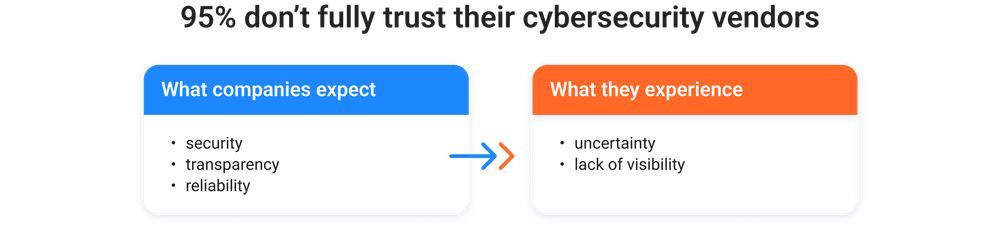

Trust is a major barrier to AI adoption. World Economic Forum research shows that 39% of organizations cite uncertainty about AI risk as a key barrier, and 41% highlight the need for human oversight. At the same time, 95% of organizations report they do not fully trust their cybersecurity vendors.

Secure and transparent AI systems help close this gap. Organizations that can demonstrate control and reliability gain stronger trust from customers and partners.

- Operational stability

Most organizations are still not operationally resilient. Around 63% of organizations fall into a high-risk “exposed” category, meaning their security capabilities are insufficient for modern threats. In addition, 83% lack a secure cloud foundation, which increases the likelihood of outages and misconfigurations.

AI systems depend heavily on stable infrastructure. Strong security practices reduce downtime, prevent cascading failures, and improve system reliability under load.

- Faster and safer AI adoption

AI adoption is already widespread. 77% of organizations are using AI in cybersecurity, mainly for detection and response. At the same time, adoption outpaces governance. 98% of organizations are integrating generative AI, but only 66% conduct regular security assessments, and 97% of organizations that experienced AI-related attacks lacked proper controls.

These numbers highlight a clear pattern. AI security directly influences cost, trust, resilience, and the speed of innovation.

However, no matter how compelling the arguments in favor of AI adoption may be, they are often accompanied by common misconceptions.

We’ve already broken down some of the most persistent myths around AI and LLM security and explained why they don’t hold up in real-world scenarios.

How AI security tools address real development problems

AI security tools are not interchangeable. Each one is designed to solve a specific bottleneck in the development or security process. Some focus on detection, others on prioritization, and others on remediation or response.

However, understanding where a tool fits is more important than understanding what it does in isolation.

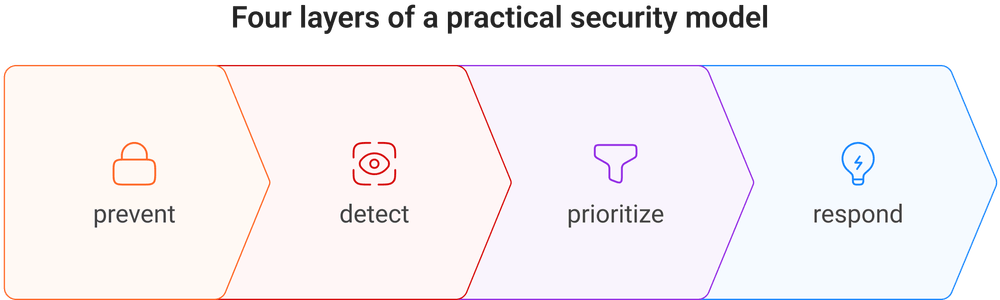

Every tool in this space aligns with a particular stage of the lifecycle. Code-level tools help developers catch issues early. Cloud and runtime tools identify risks that only appear in production. SOC platforms focus on triage and response. The value comes from how these tools complement each other, not from any single capability.

Let’s break down where different AI security tools truly excel and in which situations they make the biggest difference.

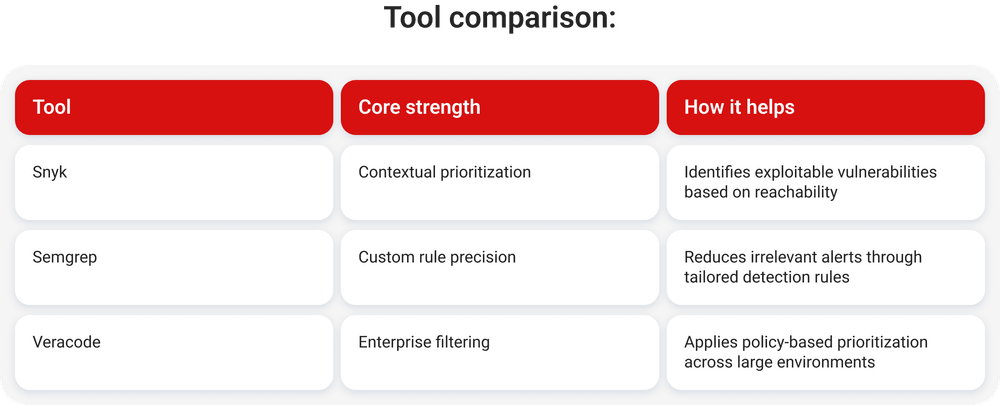

Reducing noise and improving signal quality

Security tools generate large volumes of alerts. Many findings are not exploitable in practice, which slows teams down and reduces trust in results. IBM highlights alert fatigue as a major issue affecting response efficiency.

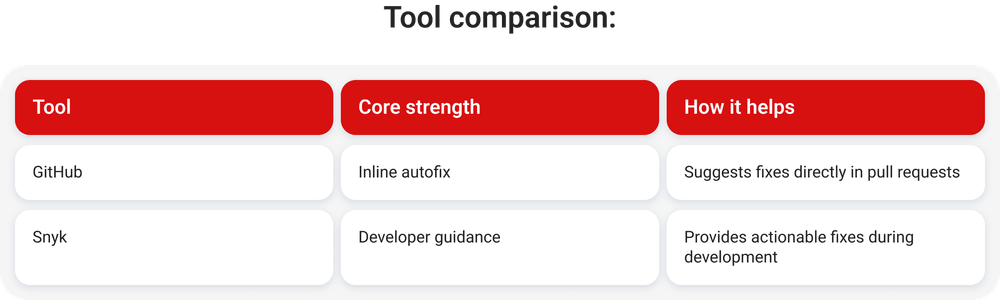

Embedding security into developer workflows

Security tools often fail when they interrupt development. AI-assisted tools integrate remediation directly into coding environments.

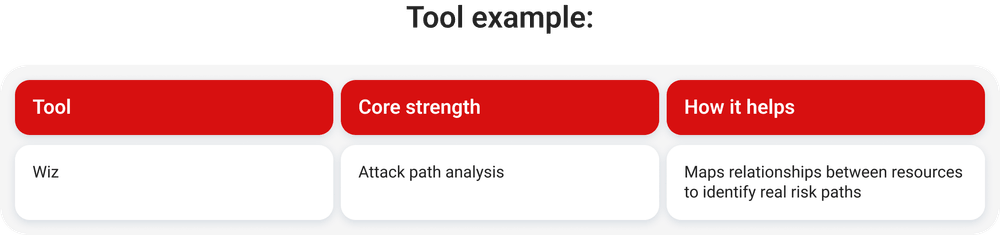

Understanding cloud and runtime risk

Applications that pass code checks can still be exposed in production. Cloudflare reports attackers increasingly target misconfigurations and identity systems.

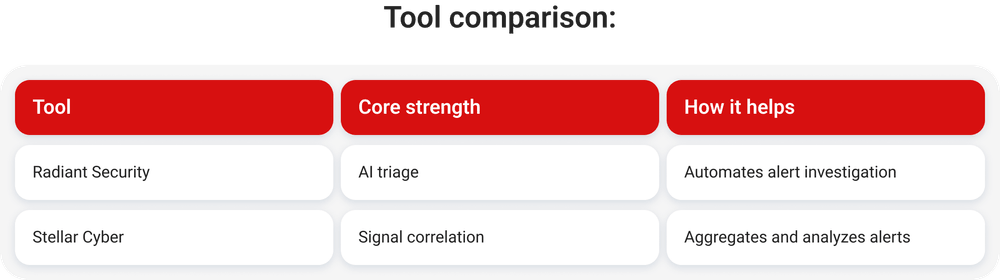

Reducing SOC alert overload

Imagine trying to investigate a real threat while thousands of alarms are going off at the same time. That is how modern SOCs (Security Operations Centers) operate.

Security teams process alerts from multiple systems, each generating its own signals and priorities. The volume quickly becomes unmanageable, and manual triage does not scale. Analysts spend significant time reviewing low-priority or duplicate alerts, which slows down response to actual threats.
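Even before any AI-based triage, collapsing duplicates shows how much of the volume is repetition. The sketch below groups alerts by rule and host, keeps the highest severity seen, and counts occurrences. The alert fields (`rule`, `host`, `severity`) are an assumed schema for illustration and do not reflect any specific SOC platform.

```python
def deduplicate_alerts(alerts):
    """Collapse alerts sharing (rule, host); keep max severity and a count."""
    grouped = {}
    for alert in alerts:
        key = (alert["rule"], alert["host"])
        entry = grouped.get(key)
        if entry is None:
            grouped[key] = {**alert, "count": 1}
        else:
            entry["count"] += 1
            entry["severity"] = max(entry["severity"], alert["severity"])
    # Highest severity first, then the noisiest groups.
    return sorted(grouped.values(), key=lambda a: (-a["severity"], -a["count"]))


# Hypothetical alert stream from several tools.
raw_alerts = [
    {"rule": "failed-login", "host": "web-1", "severity": 2},
    {"rule": "failed-login", "host": "web-1", "severity": 3},
    {"rule": "failed-login", "host": "web-1", "severity": 2},
    {"rule": "new-admin-user", "host": "db-1", "severity": 5},
]

for alert in deduplicate_alerts(raw_alerts):
    print(alert["rule"], alert["host"], alert["severity"], alert["count"])
```

Grouping like this is only a baseline. The AI-driven tools discussed here go further by correlating related alerts across systems and ranking them by likely impact.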

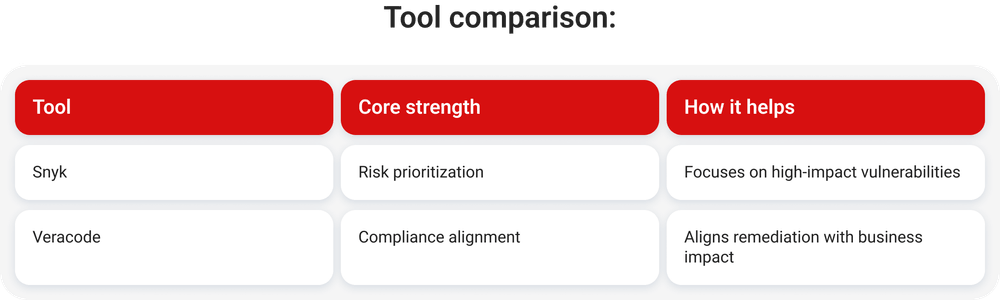

Managing vulnerability backlogs

Detection now far outpaces remediation. Modern security programs are highly effective at finding vulnerabilities, but fixing them remains a bottleneck.

Recent industry data shows the scale of the problem. 82% of organizations are dealing with security debt, meaning unresolved vulnerabilities continue to accumulate faster than teams can eliminate them.

Even more concerning, 60% of organizations carry critical security debt, which includes high-risk flaws that can be exploited in a real-world attack.

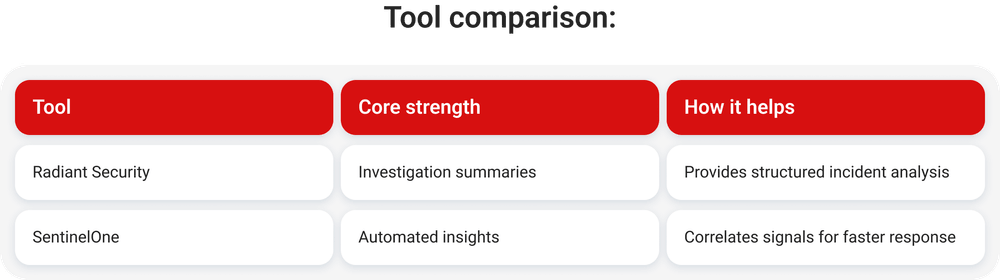

Accelerating incident investigation

Do you think everything is resolved once a security breach is detected? In reality, that is where the most time-consuming part begins.

After detection, teams need to understand what happened, how the attack unfolded, what systems were affected, and whether the threat is still active. This process often involves analyzing logs, correlating signals across multiple tools, and reconstructing timelines. In many organizations, this can take hours or even days, especially when data is fragmented.

AI tools reduce this investigation time by summarizing events and highlighting the signals that matter.

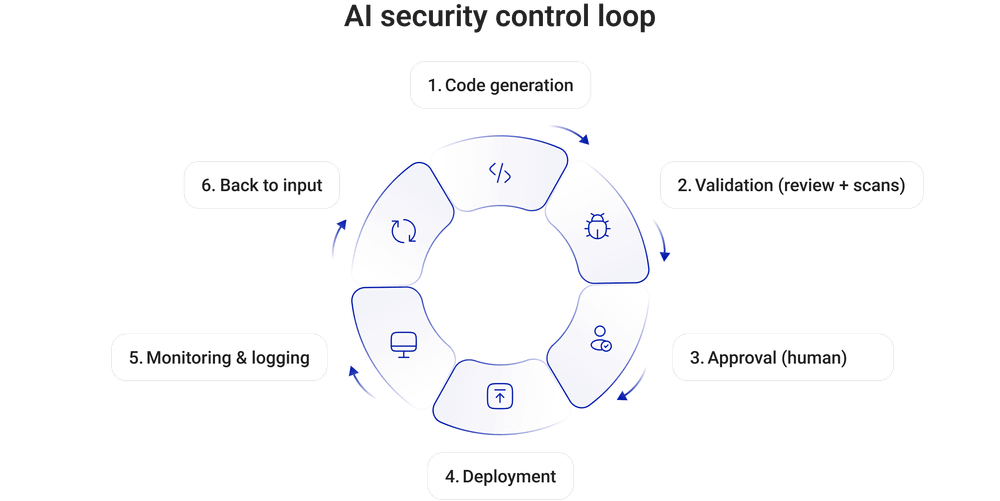

AI security principles for development teams

Organizations need clear principles to manage AI securely. Human accountability remains essential. AI assists decision-making, but engineers remain responsible for outcomes.

These recommendations are based on practical experience at the intersection of software development and AI, where security decisions need to align with how systems are actually built and deployed.

1. Human accountability must be enforced

AI can generate code, suggest fixes, and assist with decisions. Responsibility still belongs to engineers.

Every AI-generated change should have a clear owner. That owner must review, validate, and approve the output before it reaches production.

In practice, teams should treat AI output as external code:

- Require pull request review for all AI-generated changes

- Track who approved the change and why

- Maintain audit logs for AI-assisted contributions

Without ownership, accountability becomes a formality, and risks go unnoticed.

2. Security must stay inside the workflow

AI should not bypass existing security controls. It must integrate into the same processes that govern manual development.

If AI speeds up coding but skips validation, it increases risk instead of reducing it.

Teams should enforce:

- Automated security scans in CI for all generated code

- Dependency checks for AI-suggested libraries

- Policy enforcement before merging code

This keeps security aligned with development speed.
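As one example of a dependency check, a CI step can compare the packages named in a requirements file against an approved list. This is a minimal sketch: the approved set and the sample file content are invented for illustration, the parser handles only simple `name==version` style lines, and it does not replace a vulnerability scanner. Package names are normalized the way PEP 503 describes so that `Flask_Login` and `flask-login` compare as equal.

```python
import re


def normalize(name):
    """Normalize a package name as described in PEP 503."""
    return re.sub(r"[-_.]+", "-", name).lower()


def parse_requirement_names(requirements_text):
    """Extract package names from simple requirements.txt-style lines."""
    names = []
    for line in requirements_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments
        if not line or line.startswith("-"):  # skip blanks and pip options
            continue
        match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
        if match:
            names.append(normalize(match.group(0)))
    return names


def unapproved_dependencies(requirements_text, approved):
    """Return the packages that are not on the approved list."""
    approved_normalized = {normalize(name) for name in approved}
    return [n for n in parse_requirement_names(requirements_text)
            if n not in approved_normalized]


# Hypothetical approved list and a requirements file an AI tool has modified.
APPROVED = {"requests", "flask-login"}
requirements = """\
requests==2.31.0
Flask_Login>=0.6
# added by an AI suggestion
leftpad-utils
"""

print(unapproved_dependencies(requirements, APPROVED))
```

Failing the build on a non-empty result forces a human decision before an unfamiliar library reaches the main branch.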

3. Data protection requires clear boundaries

AI tools process prompts that may contain sensitive information. This creates a new exposure point that many teams underestimate.

Developers often paste code, logs, or configuration data into AI tools. In some cases, this includes credentials or internal APIs.

Teams must define strict rules for what can and cannot be shared:

- No credentials, tokens, or private keys in prompts

- No production data or customer information

- No internal system architecture details

They also need to review how vendors store and process this data. Many AI systems log interactions, which creates long-term exposure.
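Rules about prompts are easier to follow when a tool checks them automatically. The sketch below scans a prompt for a few obvious secret patterns before it leaves the developer's machine. The pattern set is a small illustrative assumption, not a complete detector: it will miss many secret formats and can flag harmless text, so it should complement policy and training rather than replace them.

```python
import re

# Illustrative patterns only; real secret scanners ship far larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "credential_assignment": re.compile(
        r"(?i)\b(password|secret|token|api[_-]?key)\b\s*[:=]\s*\S+"
    ),
}


def find_secrets(prompt):
    """Return the sorted names of all patterns that match the prompt text."""
    return sorted(
        name for name, pattern in SECRET_PATTERNS.items() if pattern.search(prompt)
    )


def safe_to_send(prompt):
    """A prompt is considered safe only if no pattern matches."""
    return not find_secrets(prompt)


print(find_secrets("Why does login fail? password = hunter2"))
print(safe_to_send("How do I reverse a list in Python?"))
```

A check like this can run in an editor plugin or a proxy in front of the AI tool, blocking the request and telling the developer which rule was triggered.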

4. Output validation must be systematic

AI-generated code can look correct but still contain security flaws. These flaws often relate to logic, edge cases, or outdated practices.

Validation must happen at multiple levels:

- Peer review to assess logic and architecture

- Automated scanning to detect known vulnerabilities

- Testing to validate behavior under real conditions

For example, an AI-generated authentication flow may pass basic checks but still allow privilege escalation. Without testing, this risk remains hidden.
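Negative tests are what typically expose this kind of flaw: instead of confirming that an admin can log in, they confirm that everyone else cannot act as one. Here is a minimal sketch. The role names and the `can_access` function are hypothetical stand-ins for whatever authorization logic the generated code contains.

```python
ROLE_RANK = {"viewer": 0, "editor": 1, "admin": 2}


def can_access(user_role, required_role):
    """Allow access only when the user's role ranks at or above the required one."""
    if user_role not in ROLE_RANK or required_role not in ROLE_RANK:
        return False  # unknown roles are denied rather than given a default
    return ROLE_RANK[user_role] >= ROLE_RANK[required_role]


def find_escalations(check):
    """Run negative cases and return every one that was wrongly allowed."""
    negative_cases = [
        ("viewer", "admin"),  # lower role asking for admin rights
        ("editor", "admin"),
        ("", "viewer"),       # missing role
        ("ADMIN", "admin"),   # case variation that should not match
        ("root", "admin"),    # role that does not exist
    ]
    return [case for case in negative_cases if check(*case)]


print(find_escalations(can_access))
```

An empty result means none of the tested escalation paths worked. Any entry in the list points to a specific input that the authorization logic handled incorrectly.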

5. Tool usage must be controlled

AI adoption often starts at the individual developer level. This creates visibility gaps and inconsistent security practices.

Organizations need a defined approach to tool usage:

- Maintain a list of approved AI tools

- Evaluate tools based on data handling and access control

- Monitor which tools are used across teams

Without this, shadow AI usage introduces risks that security teams cannot track or manage.

6. Transparency enables control

AI changes how code is created and modified. Teams need visibility into these changes to maintain control over their systems.

This includes:

- Tracking where AI-generated code is used

- Logging interactions with AI tools where possible

- Linking AI-generated changes to specific commits and reviews

This becomes critical during incident investigation. If a vulnerability appears, teams need to know whether AI contributed to it.
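One simple way to link AI-generated changes to commits is a commit message trailer that developers or tooling add whenever AI assistance was used. The sketch below assumes a team convention of an `AI-Assisted: true` trailer. That trailer name is hypothetical, not a Git standard, and the commit data is invented for the example.

```python
def ai_assisted_commits(commits, trailer_key="AI-Assisted"):
    """Return SHAs of commits whose message carries the (assumed) AI trailer."""
    flagged = []
    for commit in commits:
        for line in commit["message"].splitlines():
            key, separator, value = line.partition(":")
            if (
                separator
                and key.strip().lower() == trailer_key.lower()
                and value.strip().lower() in {"true", "yes"}
            ):
                flagged.append(commit["sha"])
                break  # one matching trailer is enough for this commit
    return flagged


# Hypothetical commit history, for example exported from `git log`.
history = [
    {"sha": "a1b2c3", "message": "Add retry logic\n\nAI-Assisted: true"},
    {"sha": "d4e5f6", "message": "Fix typo in README"},
    {"sha": "0a9b8c", "message": "Refactor auth\n\nReviewed-by: Dana\nAI-Assisted: yes"},
]

print(ai_assisted_commits(history))
```

With a marker like this in place, an investigation can quickly narrow a vulnerable file's history down to the changes where AI was involved and see who reviewed them.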

These principles work only when they are embedded into daily workflows. AI security is not a separate layer or a one-time process. It is part of how code is written, reviewed, and deployed.

AI governance framework for secure AI development

AI governance defines how organizations control, monitor, and take responsibility for the use of AI across development and operations. It sets clear rules for how AI tools are selected, how they process data, and how their outputs are validated before reaching production systems.

From an executive perspective, the question is whether AI is used in a controlled and accountable way. Without governance, AI introduces risks that are difficult to detect and even harder to manage, including data exposure, compliance violations, and unpredictable system behavior, no matter how clearly the development principles are defined.

In collaboration with Aristek’s security team, we have put together a set of recommendations based on real-world experience.

Define ownership across teams

AI security requires coordination across security, engineering, and infrastructure teams. Each group covers a different part of the lifecycle and has distinct responsibilities.

Security teams focus on control and risk:

- Approve AI tools before adoption

- Define security policies and guardrails

- Monitor AI-related risks and incidents

Engineering teams focus on implementation:

- Validate AI-generated code before merging

- Follow secure development practices in AI-assisted workflows

- Report issues related to AI usage

DevOps and infrastructure teams focus on operations:

- Secure AI infrastructure and integrations

- Maintain logging and monitoring systems

- Enforce security controls in CI and deployment pipelines

This structure reflects how AI is used in practice. Security spans development, deployment, and runtime.

A real example shows the impact of missing ownership. In 2026, an internal AI agent at Meta provided incorrect guidance that led to the exposure of sensitive internal data across teams, triggering a company-wide security alert.

In another case, an AI coding agent contributed to a production failure that caused a 13-hour outage in AWS Cost Explorer. It demonstrates how AI-generated changes can disrupt system availability if validation is skipped.

Control AI tool adoption

AI tools often enter through individual developers trying to increase speed. Without a structured approval process, organizations lose visibility and control.

Each tool should undergo evaluation before adoption. Key criteria include:

- Vendor security posture and compliance

- Data handling and storage practices

- Logging and monitoring capabilities

- Access control mechanisms

Approved tools should be documented in a central registry. This creates clarity across teams and reduces unauthorized usage.
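A central registry can start as something very small. The sketch below records a review against the four criteria listed above and only registers tools that pass all of them. The class names, field names, and the sample tools are assumptions made for illustration. In practice the registry would live in a shared system with an approval workflow behind it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolReview:
    """Outcome of evaluating one AI tool against the adoption criteria."""
    name: str
    vendor_compliance: bool
    data_handling: bool
    logging_and_monitoring: bool
    access_control: bool

    def failed_criteria(self):
        checks = {
            "vendor_compliance": self.vendor_compliance,
            "data_handling": self.data_handling,
            "logging_and_monitoring": self.logging_and_monitoring,
            "access_control": self.access_control,
        }
        return [criterion for criterion, passed in checks.items() if not passed]


class ToolRegistry:
    """In-memory list of approved tools, keyed by lowercase name."""

    def __init__(self):
        self._approved = {}

    def submit(self, review):
        """Register the tool if every criterion passed; return any failures."""
        failures = review.failed_criteria()
        if not failures:
            self._approved[review.name.lower()] = review
        return failures

    def is_approved(self, tool_name):
        return tool_name.lower() in self._approved


# Hypothetical tools under review.
registry = ToolRegistry()
registry.submit(ToolReview("CodeHelper", True, True, True, True))
rejected = registry.submit(ToolReview("QuickGen", True, False, True, False))
print(registry.is_approved("codehelper"), rejected)
```

Returning the failed criteria, rather than a plain yes or no, tells the requesting team exactly what a vendor would need to change for the tool to be reconsidered.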

Industry data confirms the scale of this issue. The WEF report cited above states that a significant portion of employees use AI tools without formal approval, which creates security blind spots and increases exposure to data leakage.

Monitor AI usage in practice

Once AI tools are in use, visibility becomes critical. Teams need to understand how AI affects development workflows and production systems.

Effective monitoring includes:

- Logging interactions with AI tools where possible

- Tracking AI-generated code in repositories

- Monitoring prompt usage for sensitive data exposure

- Identifying unusual or risky usage patterns

This level of visibility is essential during incident investigation. Teams need to determine whether AI contributed to a vulnerability and how it entered the system.

Audit and control continuously

AI governance requires continuous validation. Tools evolve, workflows change, and risks shift over time.

Regular audits should evaluate:

- Whether approved tools are used consistently

- Whether teams follow defined security practices

- Whether AI usage introduces new risks

This allows organizations to adjust controls before issues scale across systems.

AI governance is becoming a core part of how organizations operate. It connects technology decisions with business risk and accountability. Without it, AI adoption creates hidden exposure that grows over time. With it, organizations can scale AI systems in a controlled way, maintain visibility across teams, and align innovation with risk management.

What comes next

AI will continue to improve automation and remediation. Gartner identifies autonomous systems as a key trend.

Complexity will continue to grow. Security will depend on how teams combine tools, processes, and governance.

AI doesn’t become useful on its own. It becomes useful when it fits how your team builds, reviews, and delivers software. Results depend on how well AI is integrated into workflows, how outputs are validated, and how decisions are controlled across the development process.

At Aristek, we support this in practice. We help teams structure how AI is used in development, define where it adds value, and set up processes that keep quality and accountability in place. If you’re exploring how AI could fit into your development workflows or want to refine your current approach, feel free to reach out and discuss your case.