AI-Driven Ground Handling Intelligence Solution for a Leading Logistics Provider

A leading logistics provider engaged Aristek to implement AI-based flight delay prediction in ground handling. Early analysis revealed the need for a structured, reliable data foundation before modeling.

Key achievements

- 200+ engineered time-series features per turnaround

- 100M+ event records consolidated into an enterprise-grade AI-ready dataset

- >95% event-to-flight mapping accuracy

Project scope

The project followed a structured, four-phase delivery approach to ensure technical stability and measurable validation.

Discovery & data mapping

We began by inventorying all relevant data sources, assessing data quality and schema consistency, and mapping operational processes to business KPIs.

This phase included defining the delay prediction use case (e.g., identifying flights at risk of turnaround delays based on historical operations, weather, and resource data).

Outcome: Clear understanding of workflows, mapped datasets, defined KPIs, and identified data gaps.

Domain data modeling

We designed a unified Ground Handling Data Model linking core entities such as:

- Flight

- OperationEvent

- EquipmentTelemetry

- EmployeeShift

- CargoShipment / ULD

This model created a consistent operational timeline across systems, enabling accurate feature engineering for delay prediction and future optimization use cases.

Outcome: A structured domain model ready to support ML pipelines.
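As a minimal sketch of how two of these entities might be linked, the example below attaches each operation event to the flight occupying the same stand at the event's timestamp. All field names (`stand`, `on_block`, `off_block`) and the matching rule are assumptions made for illustration, not the project's actual schema or mapping logic.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class Flight:
    flight_id: str        # hypothetical key
    stand: str            # hypothetical: parking position
    on_block: datetime    # aircraft arrives at the stand
    off_block: datetime   # aircraft leaves the stand


@dataclass
class OperationEvent:
    event_type: str
    stand: str
    timestamp: datetime
    flight_id: Optional[str] = None  # filled in by the mapping step


def map_event_to_flight(event: OperationEvent, flights: List[Flight]) -> Optional[str]:
    """Assumed rule: same stand, timestamp inside the on-block/off-block window."""
    for flight in flights:
        if (flight.stand == event.stand
                and flight.on_block <= event.timestamp <= flight.off_block):
            event.flight_id = flight.flight_id
            return flight.flight_id
    return None  # unmapped events stay visible as data gaps


flights = [
    Flight("F1", "A12", datetime(2024, 1, 1, 8, 0), datetime(2024, 1, 1, 9, 0)),
    Flight("F2", "A12", datetime(2024, 1, 1, 9, 30), datetime(2024, 1, 1, 10, 30)),
]
events = [
    OperationEvent("fueling_start", "A12", datetime(2024, 1, 1, 8, 15)),
    OperationEvent("baggage_unload", "A12", datetime(2024, 1, 1, 9, 45)),
    OperationEvent("towing", "B03", datetime(2024, 1, 1, 8, 20)),
]
mapped = [map_event_to_flight(e, flights) for e in events]
```

Here the third event has no matching flight and remains unmapped. Counting such cases is one simple way a mapping accuracy figure could be tracked.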

Data ingestion & consolidation

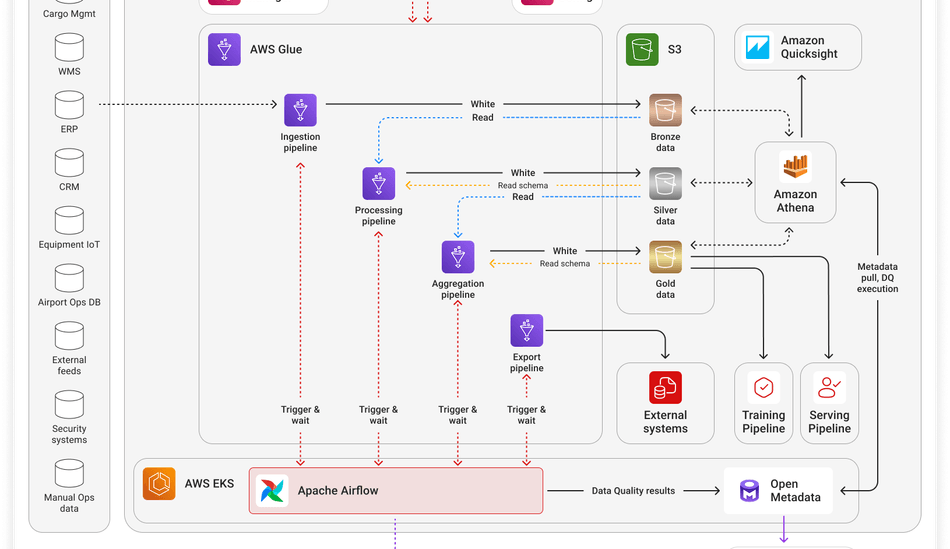

We connected operational systems, IoT streams, and external feeds into a scalable cloud architecture using a layered data design:

Bronze (landing layer): Raw ingested batch and streaming data stored with source metadata and timestamps.

Silver (curated layer): Cleaned, normalized, and structured datasets.

Gold (analytics layer): Aggregated, joined datasets ready for ML training and analysis.

Where required, a feature store was introduced to support reproducible training and real-time inference.

This separation ensured reproducibility, governance, and decoupling of ingestion from modeling. The result was a consolidated, staged raw-to-curated repository ready for ML development.
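The layering can be illustrated with a small in-memory sketch. The record fields (`flight_id`, `type`, `ts`), the cleaning rules, and the per-flight aggregation are hypothetical stand-ins. The actual pipeline runs on cloud infrastructure that is not described here.

```python
from datetime import datetime, timezone


def to_bronze(raw, source):
    """Bronze: keep the payload untouched, add source metadata and a timestamp."""
    return {
        "payload": raw,
        "source": source,
        "ingested_at": datetime.now(timezone.utc).isoformat(),
    }


def to_silver(bronze_rows):
    """Silver: drop unusable rows and normalize identifiers and types."""
    silver = []
    for row in bronze_rows:
        payload = row["payload"]
        if not payload.get("flight_id") or not payload.get("ts"):
            continue  # assumed rule: rows without a flight or time are excluded
        silver.append({
            "flight_id": payload["flight_id"].strip().upper(),
            "event_type": payload.get("type", "unknown").strip().lower(),
            "ts": datetime.fromisoformat(payload["ts"]),
            "source": row["source"],
        })
    return silver


def to_gold(silver_rows):
    """Gold: one aggregated row per flight, ready for analysis or training."""
    gold = {}
    for row in silver_rows:
        agg = gold.setdefault(
            row["flight_id"],
            {"event_count": 0, "first_ts": row["ts"], "last_ts": row["ts"]},
        )
        agg["event_count"] += 1
        agg["first_ts"] = min(agg["first_ts"], row["ts"])
        agg["last_ts"] = max(agg["last_ts"], row["ts"])
    for agg in gold.values():
        span = agg["last_ts"] - agg["first_ts"]
        agg["span_minutes"] = span.total_seconds() / 60
    return gold


raw_records = [
    {"flight_id": " ab123 ", "type": "Fueling_Start", "ts": "2024-01-01T08:10:00"},
    {"flight_id": "AB123", "type": "fueling_end", "ts": "2024-01-01T08:40:00"},
    {"flight_id": "", "type": "towing", "ts": "2024-01-01T08:20:00"},
]
bronze = [to_bronze(r, "ops_system") for r in raw_records]
silver = to_silver(bronze)
gold = to_gold(silver)
```

Because bronze keeps every record as received, the silver and gold layers can be rebuilt at any time if the cleaning or aggregation rules change.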

Proof of Concept (PoC)

With validated and structured data in place, we implemented a pilot ML pipeline for delay probability prediction and Turnaround Time (TAT) forecasting.

The model was evaluated using time-based validation (training on earlier periods and testing on later ones) to reflect real operational conditions. We validated technical feasibility and defined a production-ready architecture for scaling.
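One common way to set up time-based validation is an expanding-window split, sketched below. The fold layout and the `departure` field are assumptions for illustration. The source does not state which splitting scheme the PoC used.

```python
from datetime import datetime


def expanding_window_folds(rows, n_folds):
    """Return (train, test) pairs where every test row is later than all train rows."""
    ordered = sorted(rows, key=lambda r: r["departure"])
    fold_size = len(ordered) // (n_folds + 1)
    if fold_size == 0:
        raise ValueError("not enough rows for the requested number of folds")
    folds = []
    for k in range(1, n_folds + 1):
        train = ordered[: k * fold_size]                    # everything seen so far
        test = ordered[k * fold_size : (k + 1) * fold_size]  # the next time block
        folds.append((train, test))
    return folds


# Eight hypothetical turnarounds, deliberately out of order.
rows = [
    {"flight_id": f"F{d}", "departure": datetime(2024, 1, d, 12, 0)}
    for d in (5, 1, 8, 3, 2, 7, 4, 6)
]
folds = expanding_window_folds(rows, n_folds=3)
```

Unlike a random split, this layout never lets the model train on turnarounds that happened after the ones it is evaluated on.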

The PoC demonstrated strong predictive reliability and identified key contributors to delays, including:

- Resource contention density

- Late inbound aircraft

- Shift transition overlaps

- Weather variability

- Equipment allocation saturation

How it works

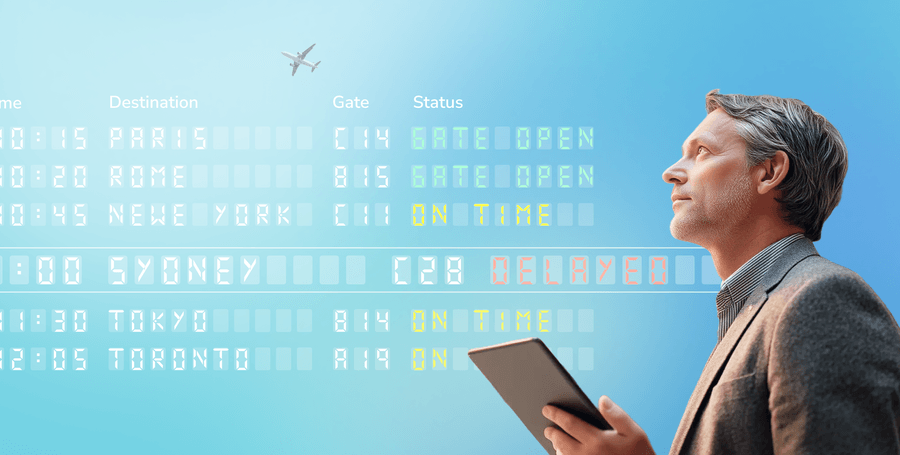

After data consolidation and ML deployment, the pilot AI operates as follows:

- Operational events are ingested and standardized in near real-time.

- Feature pipelines transform raw events into predictive signals.

- The ML model generates delay probabilities and forecasts.

- Supervisors take action, and feedback is logged for retraining.
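The flow above can be sketched end to end in a few lines. Everything specific here is an assumption: the feature names, the logistic scoring function standing in for the trained model, and the placeholder coefficients, which are not learned from project data.

```python
import math
from datetime import datetime

# Placeholder coefficients for illustration only; NOT learned from project data.
WEIGHTS = {"inbound_delay_min": 0.08, "open_tasks": 0.5}
BIAS = -3.0


def build_features(flight, events):
    """Turn standardized events into model inputs (hypothetical feature names)."""
    late_by = flight["actual_arrival"] - flight["scheduled_arrival"]
    inbound_delay_min = max(0.0, late_by.total_seconds() / 60)
    open_tasks = sum(
        1 for e in events
        if e["flight_id"] == flight["flight_id"] and e["status"] == "open"
    )
    return {"inbound_delay_min": inbound_delay_min, "open_tasks": open_tasks}


def delay_probability(features):
    """Logistic score standing in for the trained model."""
    z = BIAS + sum(WEIGHTS[name] * value for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-z))


feedback_log = []


def record_feedback(flight_id, probability, action, was_delayed):
    """Store the supervisor's action and the observed outcome for retraining."""
    feedback_log.append({
        "flight_id": flight_id,
        "probability": probability,
        "action": action,
        "was_delayed": was_delayed,
    })


flight = {
    "flight_id": "AB123",
    "scheduled_arrival": datetime(2024, 1, 1, 8, 0),
    "actual_arrival": datetime(2024, 1, 1, 8, 25),
}
events = [
    {"flight_id": "AB123", "type": "fueling", "status": "open"},
    {"flight_id": "AB123", "type": "catering", "status": "open"},
    {"flight_id": "AB123", "type": "cleaning", "status": "done"},
    {"flight_id": "CD456", "type": "fueling", "status": "open"},
]
features = build_features(flight, events)
probability = delay_probability(features)
record_feedback("AB123", probability, action="added_fuel_truck", was_delayed=False)
```

Logging the prediction together with the action taken and the eventual outcome is what makes later retraining possible, since each record pairs model inputs with a real result.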

Tools & technologies

Key takeaways

The project highlighted that AI success depends on data readiness and thoughtful architectural design. A structured data foundation proved more critical than the model itself.

Through disciplined data assessment, domain modeling, and pipeline architecture design, we established a production-ready framework built for scalability. What began as a pilot resulted in a reliable, governed, and extensible data backbone.

The collaboration continues with:

- Real-time streaming ingestion for continuous prediction refresh

- Automated drift detection and controlled retraining

- Expansion to additional operational datasets

- Production hardening of monitoring and governance controls
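As an illustration of what a drift check could look like, the sketch below computes the Population Stability Index (PSI) between a baseline feature sample and a recent one. PSI is one widely used drift measure, chosen here as an assumption. The source does not say which method the team plans to adopt, and the bin count and smoothing constant are arbitrary.

```python
import math


def population_stability_index(expected, actual, n_bins=5):
    """PSI between a baseline sample and a recent sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / n_bins or 1.0  # guard against a constant baseline

    def proportions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)  # clip values outside the baseline range
            counts[idx] += 1
        eps = 1e-6  # smoothing so empty bins do not produce log(0)
        return [(c + eps) / (len(values) + n_bins * eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [float(v) for v in range(100)]   # hypothetical training-time values
recent_same = list(baseline)                 # no drift
recent_shifted = [v + 40 for v in baseline]  # distribution moved upward

psi_same = population_stability_index(baseline, recent_same)
psi_shifted = population_stability_index(baseline, recent_shifted)
```

A score near zero means the recent data resembles the baseline. A retraining trigger would compare the score against a threshold agreed with the operations team.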